About Robert M. Hartranft

Version date 24 November 2016

This material is NOT, and will not be, patented or copyrighted by the authors.

I did my undergraduate work in Engineering Physics at Cornell University. I did my graduate work in Nuclear Engineering at the University of Michigan. I taught for four years at the U.S. Naval Nuclear Power School (NPS). I taught five different courses, of which I partially or fully revised four. I advanced from newly commissioned Ensign to full Lieutenant (O-3; called Captain in the other services) in a brisk three years.

I taught Physics and Advanced Algebra at Lewis S. Mills H.S., Region 10, CT, and was named Best New Teacher, but returned to Engineering for financial reasons.

I have worked in or visited 49 states and 35 countries. I have held Secret, Top Secret, L, and Q-Weapons clearances. I have been shot at and traveled the former USSR with my own fully-armed KGB “minder”. I have managed or significantly contributed to some of this nation’s most successful power projects, including Calvert Cliffs (Maryland) and Palo Verde (Arizona).

I have been at Governor’s House, a nursing home in Simsbury CT, for seven years because of spinal stenosis. I am now serving my fourth term as President of the Residents Council, and have been re-elected for the 2017 term. I am working on about a dozen projects including Grading, Cosmology, and Pyramids.

A Law-Abiding Cosmology Model

24 November 2016 Simsbury CT 06070

Robert M. Hartranft rmhartranft@gmail.com

Scott W. Hartranft

The Abstract: Suppose that there were initially no physical things – no mass, no energy, no time, no laws of physics, just endless void. But at some point, in the true “creation event”, the laws of physics came into being, including conservation of mass-energy. Suppose further that these laws are truly invariant: from that instant onward, they were everywhere, and always will be, as they are here and now.

The three most fundamental equations for mass ($F = ma$; $F = G m_1 m_2 / r^2$; and $E = mc^2$) are all symmetric for positive and negative values of m. This suggests a family of negative mass particles ("unmatter"), with zero net mass-energy for the universe overall. As allowed by quantum mechanics, there would then follow random appearances of a positive mass particle and its negative mass unmatter counterpart.

The Big Bang would therefore have required zero net mass-energy, and would have produced two precisely concentric, inter-meshed, expanding spheres, one of positive m matter, the other of negative m unmatter. As the spheres expanded to their current, roughly 28 billion light-year diameters, they became progressively more segregated, leading to apparently huge voids if only one sphere is considered. As segregation further increased, the unbalanced local forces increased, leading to the observed and heretofore exceedingly puzzling accelerating expansion.

The paper: Suppose the laws of physics are truly invariant: the same at every time and place; before, during, and after the Big Bang; inside, outside, and on the boundaries of black holes; etc. No clever contrivances like “Cosmic Inflation” to get the universe expanding. No physics magic like “Dark Energy” to accelerate expansion. The laws of physics were, are, and always will be as they are here and now.

And a further simplification: there were no physical things before the Big Bang – no mass, no energy, just endless void. But at some point, the laws of physics came into being, including conservation of mass-energy. That is to say, the true creation event was the creation of the laws of physics: the Big Bang was simply an allowed event. John 1:1 in the King James Version of the Bible provides an elegant summary:

“In the beginning was the Word, and the Word was with God,

and the Word was God.”

Or in the notation of modern mathematical physics:

$\sum_{\text{universe}} m = 0$

| Physical Property | Positive Components | Negative Components | Net for Universe |
| --- | --- | --- | --- |
| Electric charge | (+) charges | (-) charges | 0 |
| Magnetic pole | North poles | South poles | 0 |
| Rotation | Clockwise | Counter-clockwise | 0 |
| Mass | Matter | “Unmatter” | 0 |

What cosmology would result?

What follows is the authors’ speculation about such a law-abiding universe. We believe that the model proposed here can be described in finite-element analysis computer code, and then run to explore aspects of cosmology never before susceptible to computer analysis. We hope others will agree and do those analyses.

We begin by noting that the three most fundamental equations for mass,

$F = ma$ (Inertia)

$F = G m_1 m_2 / r^2$ (Gravity)

$E = mc^2$ (Relativity)

are all symmetric for positive and negative values of m. This suggests a family of negative mass particles ("unmatter" hereafter), with zero net mass-energy for the universe overall. The positive m matter gravitationally attracts other positive m matter; negative m unmatter gravitationally attracts other unmatter; but positive m matter gravitationally repels negative m unmatter. (The parallel to British physicist P.A.M. Dirac in 1930 is clear and compelling. Dirac made a similar observation about the electromagnetic equations. The discovery of the positron followed quickly, followed by the whole anti-matter family.)

The Big Bang would therefore have required zero net mass-energy, and would have produced two precisely concentric, inter-meshed, expanding spheres, one of positive m matter, the other of negative m unmatter. After expanding at light speed for an hour, each sphere could hold $10^{81}$ nucleons, which is one estimate of the number of atoms in the visible universe.

Note that the universe was at this stage, and had always been, zero net mass-energy, zero net magnetic poles, zero net rotation, etc. To this instant it would therefore have had zero net gravitational force and zero net electromagnetic force, just short-range nuclear forces. In consequence, the universe would have seemed identical everywhere except at the outermost layer. As expansion continued, however, the structure would have begun to disassemble at virtually every nucleon diameter.

After 7.2 years, the spheres would have had sufficient volume for $10^{81}$ hydrogen atoms. They would have repelled and segregated at myriad local sites, providing the birth areas for an immense number of large, short-lived stars, which in turn provided the evidently immense number of black holes in the universe today. As expansion and matter/unmatter segregation progressed, the heretofore puzzling early cosmic anisotropy and galactic spins naturally appeared.
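The two capacity figures above (one hour for nucleons, 7.2 years for hydrogen atoms) can be checked to order of magnitude with a short Python sketch. It is only a rough illustration: the particle radii (about 0.85 fm for a nucleon, the Bohr radius for a hydrogen atom) and the idea of simply dividing the sphere volume by the particle volume, with no allowance for packing, are assumptions and are not taken from the text.

```python
# Order-of-magnitude check of how many particles fit in a sphere
# that has expanded at light speed for a given time.
# ASSUMPTIONS (not from the text): the particle radii below, and the
# simple volume ratio with no packing-fraction correction.

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0
SECONDS_PER_YEAR = 365.25 * 24 * 3600

NUCLEON_RADIUS_M = 0.85e-15   # assumed nucleon radius
BOHR_RADIUS_M = 5.29e-11      # assumed hydrogen atom radius


def sphere_capacity(expansion_seconds, particle_radius_m):
    """Ratio of sphere volume to single-particle volume, (R / r)**3."""
    sphere_radius_m = SPEED_OF_LIGHT_M_PER_S * expansion_seconds
    return (sphere_radius_m / particle_radius_m) ** 3


nucleons_after_one_hour = sphere_capacity(3600.0, NUCLEON_RADIUS_M)
atoms_after_7_2_years = sphere_capacity(7.2 * SECONDS_PER_YEAR, BOHR_RADIUS_M)

print(f"nucleons after one hour:      {nucleons_after_one_hour:.1e}")
print(f"hydrogen atoms after 7.2 yr:  {atoms_after_7_2_years:.1e}")
```

Under these assumptions both results come out within a small factor of $10^{81}$; a different choice of radius or a packing correction would shift them somewhat.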

As the spheres expanded to their current, roughly 28 billion light-year diameters, they became progressively more segregated, leading to apparently huge voids if only one sphere is considered. As segregation further increased, the unbalanced local forces increased, leading to the observed and heretofore exceedingly puzzling accelerating expansion.

Two verification experiments: Looking for locations in a sky map where unphotons from an ungalaxy have “cancelled” the positive energy photon "mist" should work. Such locations would appear as small black dots in the sky map, stable in both time and position.

A direct imaging camera may also work. The detector pixel could be supplied electrons at elevated energy. Any transitions to ground state without photon emission would hopefully be mostly from unphoton absorption. The lens would be just a drilled block of the same material held at the elevated energy.

Conclusion: We note with immense pleasure that extremely clever, but painfully inelegant, special rules like cosmic inflation and dark energy, are simply unnecessary, replaced by a single, invariant set of laws. Like any beautiful lady, Mother Nature values elegance far above cleverness.

About the authors: Both authors are graduates of the Cornell University College of Engineering: Robert in Engineering Physics in 1966, and Scott in Electrical and Computer Engineering in 2001. Robert is Scott’s father. This work was made possible by the tireless support of Dr. Martha Hartranft (Robert’s wife, Scott’s mother).

Footnotes:

Anti-matter vs. unmatter: It is important to understand that ordinary anti-matter is still positive mass. For example, when an electron and an anti-electron (a.k.a. a positron) “annihilate”, they create two 0.511 MeV gamma rays, precisely equivalent ($E = mc^2$) to the sum of the masses of the two particles. By contrast, if an electron and a negative mass unelectron “cancel”, the result is that they simply disappear – which is again equivalent to the sum of the initial masses – zero.

| TYPE | Positive Mass | Negative Mass |
| --- | --- | --- |
| Normal | | |
| Anti-matter | | |

There are no “worm holes” or other cosmic shortcuts: in this model, the speed of light limitation applies everywhere and always.

Black holes are not singularities in this model, but merely quantum mechanical regions of particularly intense gravity. The laws of physics are the same inside, outside, and at the boundary of a black hole, whether of matter or unmatter.

This model vs. three major miracles. We also note that most current cosmology models require three “major physics miracles”:

1. The creation of an all-positive-mass universe, which is a huge violation of the conservation of mass-energy.

2. “Cosmic inflation” to allow initial expansion despite gravity. And,

3. “Dark Energy”, an unspecified material which somehow causes accelerating expansion in the current era.

Most models also require minor physics miracles foxxxxxx

None of these is necessary in the model proposed here. Even the Creation Event, which here is the creation of the laws of physics, is unopposed.

A variation: extrusion vs. bang. Because this model provides both positive energy matter and negative energy unmatter, it allows another creation sequence: extrusion of both matter and unmatter into a small volume. More later.

Building pyramids using very long ropes

Version date 13 November 2016

Robert M. Hartranft, Consulting Engineer, Simsbury, CT 06070, and

John B. Hartranft, Senior Designer, Flint, MI 48503

For literally thousands of years, people have speculated about how the ancient Egyptians built the pyramids at Giza. Credible speculations have included long ramps, ramps spiraled around the pyramid itself, plus varieties of lever systems and cranes. Other speculations have run from anti-gravity devices to assistance by extraterrestrials. We offer here an engineering speculation and invite comment.

Making rope is a fully "scalable" activity: if you know how to make a 20 foot long rope, you also know how to make a 200 or a 2000 foot long rope. Suppose you ran such a very long rope up one side of a partially completed pyramid, across the top, down the other side, and out to a large pulling team, say of 200 men. Suppose each man could pull 50 pounds force. (This is not very challenging: if facing in the direction of pulling, just lean forward; or better yet, as in a tug of war, turn toward the pyramid and lean backward. An even easier way to sustain this force would be to attach cross boards to the pulling rope, and have the pullers pull it from a nearly sitting position, much like a rowing crew, but with no strain on their arms or backs; Pharaoh's OSHA inspector will be pleased!) However done, there is then a 10,000 pound force pull available at the other end of the rope, which will easily pull an average 5000 pound block up the partially complete side, across the partially complete top, and directly into place, even with a generous allowance for friction. (More realistically, 80 pullers per rope should suffice.)

Suppose the pullers move at 2 feet/second (1.36 miles/hour). The block will then move from the staging area at the base, up the side, and into place in a few minutes. (A representative pull would be 300 feet up the side and 200 feet along the top, consuming less than five minutes.) At most, the men will each be expending (2 feet/second) x (50 pounds force) / (550 foot pounds/sec/horsepower) = 0.18 horsepower, which is sustainable a few minutes at a time. Many pulling teams can work parallel ropes at the same time, so that net emplacement rates of at least one block per minute can be readily achieved. At this emplacement rate, working 10 hour days, 6 days a week, 50 weeks per year (or some comparable ancient Egyptian schedule), each year 180,000 blocks can be pulled into place. Completion of the estimated 2.4 million block structure on a schedule of less than 20 years should then be achievable, even allowing for inevitable delays. Oversize blocks can be emplaced using multiple ropes.
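The arithmetic in this paragraph can be reproduced with a few lines of Python. This is only a sketch of the calculation as stated above; the function and variable names are illustrative, and every number is taken directly from the text.

```python
# Reproduces the pulling-rate arithmetic from the paragraph above.
# All figures come from the text; names are illustrative only.

FT_LBF_PER_SEC_PER_HP = 550.0


def puller_horsepower(speed_ft_per_s, force_lbf):
    """Power delivered by one puller, in horsepower."""
    return speed_ft_per_s * force_lbf / FT_LBF_PER_SEC_PER_HP


def pull_minutes(pull_length_ft, speed_ft_per_s):
    """Time to drag one block over the given distance, in minutes."""
    return pull_length_ft / speed_ft_per_s / 60.0


def blocks_per_year(blocks_per_minute, hours_per_day, days_per_week, weeks_per_year):
    """Blocks emplaced per year at a steady net emplacement rate."""
    return blocks_per_minute * 60 * hours_per_day * days_per_week * weeks_per_year


def years_to_complete(total_blocks, yearly_rate):
    """Years needed to place all blocks at the given yearly rate."""
    return total_blocks / yearly_rate


hp = puller_horsepower(2.0, 50.0)                   # about 0.18 hp
minutes = pull_minutes(300.0 + 200.0, 2.0)          # under five minutes
yearly = blocks_per_year(1.0, 10, 6, 50)            # 180,000 blocks
years = years_to_complete(2_400_000, yearly)        # under 20 years

print(f"{hp:.2f} hp per puller, {minutes:.1f} min per pull")
print(f"{yearly:,.0f} blocks per year, {years:.1f} years to complete")
```

At one block per minute the 2.4 million blocks take a little over 13 years, which leaves the margin for delays mentioned above.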

Even allowing for 20 pulling teams, only 1600 to 4000 pullers would be needed. If available, horses or oxen could also be used for pulling. Since the primary work is walking back and forth on the Giza plateau, conditions for personnel need not be murderous: it could be done by ordinary workers or by soldiers between campaigns. Since the same pulling paths could be used year after year, someone would probably decide to install ridged paving blocks to improve traction. Lightweight sun shades and abundant water for evaporative cooling in the summer would probably also appear.

Quick release wooden strongbacks would allow quick engagement of blocks in the staging area and quick release upon emplacement. Direction changes of the ropes at both upper edges of the pyramid and at the far base could be achieved with limited friction by passing them over gently curved, polished granite bearing blocks, probably with water as a lubricant. Friction between the moving block and the partially completed side and top would probably be reduced by pouring water ahead of the moving block, but no special skids or rollers are necessary: the new block would slide directly against the finish blocks on the sides and the lower blocks on the top.

This method requires no exotic technology: the ancient Egyptians clearly had heavy ropes. Nor does it require forgetting anything exotic: merely forgetting a "trick of the trade". Neither is there anything exotic to find: when complete, the very long ropes would simply be cut into shorter lengths and used for other purposes. The method works identically from the base to the capstone.

It seems likely that most of the blocks were quarried and shaped using copper or bronze saws and abrasives in an adjoining limestone quarry. This activity pace is harder to estimate, but it is also clearly achievable using technology they had, and it can also be done in parallel by many teams. If the block preparation process can be done by a comparably sized group, then the overall project should require no more than 8000 men, which is considerably more manageable and economically sustainable than the 100,000 man force sometimes postulated.

Footnotes

1. Due credit to others -- it's another ramp theory: Work in engineering is inevitably based on prior work and experience, and this speculation is no exception: it's another ramp theory. In this case, the side of the pyramid itself is the ramp for the blocks. This avoids the time and material for building separate ramps, ensures that the ramp is always ready for use for a new layer, and avoids the delays inherent in narrow ramps. Short ramps at the base reported by others would be useful for the first few layers, and thereafter could serve to transition blocks from horizontal to the slope of the pyramid side. If the casing blocks are placed during assembly on all four sides, and if the ropes are periodically shifted from side to side, the wear on the casing blocks will be evenly distributed, and will actually serve to polish their outer layer.

Several experts, including Jean-Philippe Lauer and Dr. J.D. Degreef, have proposed pulling from some location on the pyramid remote from the block itself. This is clearly possible, but we believe that pulling from the plateau instead has significant advantages, most notably avoiding needlessly walking up and down the pyramid, allowing simultaneous use of as many pulling teams as desired and of any size desired, and using a physiologically efficient pulling posture. Together with using the pyramid sides as ramps, we believe that the ancient Egyptians would have realized this early in construction. As best we can tell, however, we are the first authors to suggest this particular combination of techniques. We would be interested in seeing any earlier discussions of this combination.

2. Sliding friction of the block: Since limestone is a relatively soft material, any bottom surface irregularity on the sliding block will quickly erode to a smooth finish. Thereafter, there will be smooth limestone sliding against smooth limestone, lubricated by a slurry of limestone dust and water, which is pretty slippery stuff. We plan on experimental verification, but our engineering judgment is that the resulting coefficient of sliding friction will be relatively low.
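Until the friction coefficient is measured, its effect on the required pull can be explored with a simple inclined-plane sketch in Python. Several things here are assumptions and not part of the proposal above: the 51.84 degree face slope (the commonly quoted value for the Great Pyramid), the trial friction coefficients, and the neglect of rope friction at the direction changes.

```python
import math

# Inclined-plane estimate of the pull needed to slide a block.
# ASSUMPTIONS (not from the text): the face slope of 51.84 degrees,
# the trial friction coefficients, and no losses where the rope
# changes direction.

FACE_SLOPE_DEG = 51.84          # assumed slope of the pyramid face
BLOCK_WEIGHT_LBF = 5000.0       # average block weight from the text
FORCE_PER_PULLER_LBF = 50.0     # per-man pull from the text


def required_pull_lbf(weight_lbf, slope_deg, friction_coefficient):
    """Steady pull along the slope: W * (sin(theta) + mu * cos(theta))."""
    theta = math.radians(slope_deg)
    return weight_lbf * (math.sin(theta) + friction_coefficient * math.cos(theta))


def pullers_needed(pull_lbf, force_per_puller_lbf=FORCE_PER_PULLER_LBF):
    """Whole number of pullers needed to supply the given pull."""
    return math.ceil(pull_lbf / force_per_puller_lbf)


for mu in (0.0, 0.1, 0.2, 0.3, 0.5):   # hypothetical trial values
    pull = required_pull_lbf(BLOCK_WEIGHT_LBF, FACE_SLOPE_DEG, mu)
    print(f"mu = {mu:.1f}: pull = {pull:,.0f} lbf, pullers = {pullers_needed(pull)}")
```

Setting the slope to zero gives the pull needed along the flat top, which is just the friction coefficient times the block weight.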

3. Sliding friction and wear on the rope: Our guess is that the pyramid builders provided a wear layer on the outside of the ropes, possibly as a tightly wrapped spiral of small diameter rope, replaced as needed. A somewhat higher technology alternative to polished granite bearing blocks for rope direction changes would be large wooden or granite pulleys or drums, probably with lubricated copper or bronze sleeve bearings. These would be similar in concept and technology to chariot wheels, which were well known then, although designed and sized for far larger loads. Such pulleys or drums would substantially reduce both friction and rope wear.

4. Power expenditure by pullers: A key element of our proposal is that the pullers just walk back and forth on the Giza plateau, rather than needlessly struggling up and down a ramp themselves. This means that we can estimate their maximum power expenditure fairly closely by looking simply at the sustained power delivered to friction and to elevating the block as it moves up the side of the pyramid. The estimate provided above is a maximum output of 0.18 horsepower for a period of several minutes at a time. (A very brief period of higher force and higher output to get the block moving would not be significant, and indeed, would be further eased by the natural elasticity of a long rope as it takes up tension at the start of a pull.)

To put this in perspective, consider the case of a modern, 180 pound man walking up typical seven inch rise steps at the measured pace of one step a second. (Go try this if it isn't obvious that such a pace is easily sustained; the exercise will do you more good than thinking about it!) Such an individual is doing (180 pounds) x (7/12 foot/step) x (1 step/second) / (550 foot-pounds/second/horsepower) = 0.19 horsepower. In other words, he is working harder than even the maximum required of pyramid block pullers in this model.

In practice, this means that the actual pulling crews could have been smaller, perhaps much smaller depending on the exact friction coefficients, or that assembly could have been even faster. This would certainly have been sorted out early in construction by experience, and thereafter done at an efficient overall pace.
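The stair-climbing comparison in this footnote can be checked with a short Python sketch. The numbers are those given above; the function names are illustrative only.

```python
# Compares the power of a man climbing stairs with the maximum
# power asked of a block puller, using the figures in this footnote.

FT_LBF_PER_SEC_PER_HP = 550.0


def stair_climb_horsepower(weight_lbf, rise_in_per_step, steps_per_second):
    """Power needed to lift one's own weight up a staircase."""
    rise_ft_per_step = rise_in_per_step / 12.0
    return weight_lbf * rise_ft_per_step * steps_per_second / FT_LBF_PER_SEC_PER_HP


def puller_horsepower(speed_ft_per_s, force_lbf):
    """Power delivered by one puller walking on level ground."""
    return speed_ft_per_s * force_lbf / FT_LBF_PER_SEC_PER_HP


climber_hp = stair_climb_horsepower(180.0, 7.0, 1.0)   # about 0.19 hp
puller_hp = puller_horsepower(2.0, 50.0)               # about 0.18 hp

print(f"stair climber: {climber_hp:.2f} hp, block puller: {puller_hp:.2f} hp")
```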

5. Rope return variations: The simplest way to reset the pulling rope for another use would be to have a trailing section behind the new block, and pull the main rope back using a small group of men in the block staging area at the base of the pyramid. We can also envision a variety of other arrangements like staging areas on both sides of the pyramid, with the rope pulling another block up the opposite side as it is returned to its initial position. As with optimal crew size, we expect that the ancient Egyptians would have decided this based on experience early in construction.

THEOLOGICAL PHYSICS

Version date 30 January 2015

In an earlier paper, the authors proposed a model where the universe is composed of precisely equal amounts of positive mass-energy and negative mass-energy, now segregated into two precisely concentric, intermeshed, mutually repulsive, expanding spheres. We offer here a frankly speculative effort to consider the laws of physics using theology.

Physics: The laws of physics appear to be identical everywhere in the universe.

Theology: Monotheism – there is only one Creator.

Physics: In this model, the laws of physics are invariant throughout the entire history of the universe, with no special contrivances like “cosmic inflation” or “dark energy”. This is a profound difference between this model and the currently popular models.

Theology: The Creator is consistent about all things at all times.

Physics: The laws are quantum mechanical rather than Newtonian deterministic.

Theology: Free will exists, together with its necessary companion – evil. Each location in the universe will evolve in a unique manner, no matter how similar initially: there will be unplanned, interesting things to see. If the Creator wishes to change or direct matters at a given location, He can do so in a completely undetectable manner by changing one quantum at a time, or He can make His power evident.

Physics: Nothing can travel faster than the speed of light, and that speed is slow compared to the size of even a single galaxy.

Theology: Local independence is preserved even if an advanced civilization devotes huge resources to communication or transport. (Note that this model has no “worm holes” or other shortcuts.) But for the same reason, virtually the entire history of the universe is readily seen with telescopes: the Creator’s work is on display to all.

Physics: There are myriad planets, but each – including Earth – is unique because of quantum mechanics. Only Earth is truly Earth-like.

Theology: We see nothing in Physics which directly answers “What is man that thou art mindful of him?”, but neither do we see anything which refutes the premise of the question. It seems significant that humans have both the ability and the technology to see and understand the universe.

Physics: As a general pattern, the laws of physics appear to be few, simple, and understandable.

Theology: The Creator means His work to be understood.

Physics: The Totalitarian Principle – “Everything not forbidden is compulsory.” Except for this, an endless perfect void would fulfill all the laws of physics.

Theology: The Creator clearly favored action over inaction, even amidst uncertainty and risking evil.

In this model, the true creation event was the selection of the laws of physics: the Big Bang was simply an allowed occurrence. John 1:1 seems an elegant summary:

“In the beginning was the Word, and the Word was with God, and the Word was God.”

Again, all this is speculation, not rigorous proof. But the pattern is fascinatingly familiar.

This work was made possible by the tireless support of Marty Hartranft (Bob’s wife, Scott’s mother).

Robert Hartranft Scott Hartranft

Simsbury, CT Beaverton, OR

860 841 0258

hartranft.org rmhartranft@gmail.com

INERTIA AND GRAVITY

Version date 10 October 2014

Inertial mass and gravitational mass seem like unrelated properties, but are identical in even extremely precise experiments. We suggest here that inertia is simply self-gravity. We further suggest that the graviton has mass zero.

Suppose a very large physicist decided that the planet Earth should be treated as a particle – the “earthon”. The earthon has very high inertia because of its self-gravity. At the other extreme, a very small physicist, working at Planck scale – $10^{-35}$ meters – would reach the same conclusion about particles like electrons and quarks, whose immense relative size would cause them to interact with their own gravitons.

In short, inertia is the result of self-gravity, and inertial mass is equal to gravitational mass because they derive from the same process: gravity; self-gravity in one case, external gravity in the other.

Consider now the photon and the postulated graviton. The photon is the carrier of the electromagnetic force, but it has zero electric charge, and does not itself experience the electromagnetic force. (For example, a beam of light can pass through an intense magnetic field with no effect on either the light or the field.)

By analogy, the graviton should have zero net mass, and should not itself experience gravity.

In current models, a zero mass particle has no properties of any kind, and simply does not exist. In our model, however, it can be a composite particle, with equal amounts of positive and negative mass matter. If each part has spin 1, then the graviton is a mass 0, spin 2 particle.

This would provide an intuitively natural basis for gravitation: “source” mass (the sun, for example) would emit an endless series of gravitons in random directions. The emissions would probably occur in pairs to avoid a spin change in the emitting matter. Since the gravitons have zero mass, this continues with no change to the sun, exactly as observed.

We will leave for later a model of the interaction with the “distant” mass (the Earth, for example), except to say that the gravitons must continue in a straight line forever.

This work was made possible by the tireless support of Marty Hartranft (Bob’s wife, Scott’s mother).

Robert Hartranft Scott Hartranft

Simsbury, CT Beaverton, OR

860 841 0258

hartranft.org

On The Famous Crown

Version date 16 October 2016

How many rays emanate from the crown atop Lady Liberty, and what do they represent? The easy part of the answer is seven. Not knowing that value, I had assumed that the rays were somehow tied to the Tired and the Poor.

But that seven is a storied value, picked by the famous colorist Isaac Newton in his precedent-setting reduction of the full spectrum to just

1. Red

2. Orange

3. Yellow

4. Green

5. Blue

6. Indigo

7. Violet

So the crowning glory of this icon of America contains a subtle tribute to the greatest mathematician/physicist of all time.

Robert Hartranft

Simsbury, CT hartranft.org

860 841 0258 rmhartranft@gmail.com

Low Hertz Vibration Therapy

Robert M. Hartranft

Simsbury, CT 06070

Version date 7 November 2016

Mechanical vibration of the author in the low Hertz range produces remarkably wide-ranging, local and systemic, functionally significant results: eliminated severe edema in both legs; increased local flexibility; increased strength system-wide; increased endurance; improved cognition; increased libido; and even improved visual acuity. After four years of treatment, the effects continue, still sustained even without vibrating for a day, and still free of any apparent side effects.

The vibration is done by a bed “massager”, $72 from Amazon as shown. Initially the individual vibration heads were held in close contact (one to three cloth layers) with my legs using Velcro strips, rather than beneath the mattress. Later, I had one set duct-taped to my bed frame, and another set duct-taped to the frame of my wheelchair. In the current arrangement, there are three vibration heads in each sock, with the seventh in my left hand. Based on experience, some of the connections are now strengthened with duct tape.

The heads are small, eccentric-mass units, a technology which is simple, reliable, and easily modified for amplitude and frequency in the low Hz range: for example, I have used a much larger version on an 800 ton nuclear component.

The most credible explanation I can offer is that the low Hz mechanical vibration may mix the chemicals in the inter-neuron areas, causing a prompt (under one second) increase in the neuron-to-neuron electrical conductivity. Sustained shaking produces a longer term effect (“half life” of perhaps five hours), perhaps caused by increased chemical transport across cell walls.

Design history and considerations: About four years ago, I had a Medtronic Baclofen pump implanted in my abdomen. When it was turned on two weeks later, it started pumping at 50 RPM. In the “fundamental” frequency mode – one cycle of pump rotation produces one vibration cycle – that meant

50 Revolutions per Minute / 60 Seconds per Minute

= 0.83 vibrations per second

which, by chance, is relatively close to the resonant frequency of my brain, which I estimate to be about 7 Hz. In under a second, I had increased cognition and greater visual acuity. The attending osteopath, Dr. Matthew Raymond, of Southington CT, stated that about one quarter of his pump patients report similar experiences. It took me a week to realize this had to be a mechanical effect. I then showed that I could duplicate or amplify the effect with a simple bed massage shaker.

That unit transmitted enough power down the legs of the bed and into the floor that I felt the effect even sitting in my wheelchair. That allowed a series of planned and accidental experiments demonstrating immediately beneficial effects for a resident with a nearly immobile leg, a resident with a broken hip, and a resident with significant dementia.

Most comparable work has used kilohertz or megahertz electromagnetic stimulation, often introduced using conductors placed deep in the brain. The method here is far quicker, easier, cheaper, and less risky. I believe its effect is similar to that produced by light exercise like walking.

Calculation of payback period: Depending on the specific configuration selected and the methodology utilized, payback is a matter of single-digit days. It is inefficient to figure out how many days: just buy one and try it.

Contact information: Cell and voicemail: 860-841-0258 e-mail: rmhartranft@gmail.com

High School Grades, College Admissions, and Scholarships

Version date 30 October 2016

Robert M. Hartranft

Simsbury CT 06070

Summary: Existing high school transcripts do not contain enough information to quantitatively predict a candidate’s performance at a specific college. The easiest improvement is to use the existing grades, but convert the class rank to percentile rank. A further improvement would be to retrieve all the students in all the classes taken by the candidate and recalculate the percentile class rank based on the candidate’s actual competitors. In either event, the basis of the prediction is the use of the mean SAT (M + CR) for the candidate’s high school class rank rather than the candidate’s individual scores. This change allows evaluation of the candidate in a meaningful context.
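As a rough illustration of the first step, converting a class rank to a percentile rank might look like the following Python sketch. The midpoint formula used here is one common convention and is an assumption, as is the function name; neither is taken from any transcript system.

```python
# Converts a class rank (1 = top of the class) to a percentile rank.
# ASSUMPTION: the midpoint convention below; other conventions differ
# slightly at the top and bottom of the class.


def percentile_rank(class_rank, class_size):
    """Percent of the class ranked below the student (midpoint convention)."""
    if not 1 <= class_rank <= class_size:
        raise ValueError("class_rank must be between 1 and class_size")
    return 100.0 * (1.0 - (class_rank - 0.5) / class_size)


# A student ranked 20th in a class of 200 (hypothetical example).
print(f"{percentile_rank(20, 200):.2f}")
```

Expressing rank as a percentile puts classes of different sizes on the same 0 to 100 scale, which is what allows one school to be compared with another.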

The problem: At first glance, it seems that a student’s high school grades should be a strong and easily evaluated predictor of that student’s college grades. But there are over 25,000 high schools in the U.S., and grades actually vary by school, by year, by teacher, and by course (a group I will call here the grade “cohort”). There are over 150,000 new cohorts every year, and while local cohort data is well known to each high school, I cannot find a single instance of cohort data being made public, let alone being provided to colleges in admission applications.

It is therefore impossible for a college to quantitatively predict a student’s likely performance, and even a good guess (a process some ironically call “gut-ology”) is practical only with well-established high school/college pairs. For the same reason, colleges cannot put all their candidates on a single, quantitatively-sound scale.

Standardized tests like the SAT and the ACT provide nationally consistent results, but are relatively poor predictors of college grades. As is frequently noted, there is a considerable difference between doing well on a multiple-choice test on a Saturday morning and doing well in a nine-month course with many and varied requirements.

In short, the entire college application/admission process relies on information – the high school transcript – which is hard to interpret at best, and frequently misleading.

Some useful tools and patterns: Academic records are now almost universally digitally stored and readily retrieved for analysis. “PowerSchool” – or its competitors – can easily retrieve all the data for all the cohorts where a student has received a grade, allowing calculation of a student’s class rank whenever desired, and with an algorithm which excludes arbitrary premiums for “honors” courses.
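As a rough illustration of the class rank calculation described above, the following Python sketch computes an unweighted grade average (no premium for “honors” courses) and converts it to a percentile rank counted from the bottom of the class. The letter-grade scale, the function names, the sample numbers, and the convention of counting ties as half are all assumptions made for this example; they are not taken from PowerSchool or from any school’s actual algorithm.

```python
from statistics import mean

# Hypothetical letter-grade scale; a real school would substitute its own.
GRADE_POINTS = {"A": 4.0, "B": 3.0, "C": 2.0, "D": 1.0, "F": 0.0}


def unweighted_gpa(letter_grades):
    """Mean grade points with no premium for honors or other course labels."""
    return mean(GRADE_POINTS[g] for g in letter_grades)


def percentile_from_bottom(class_gpas, candidate_gpa):
    """Percent of the class ranked below the candidate.

    Ties are counted as half (an assumed convention for this sketch).
    """
    below = sum(1 for g in class_gpas if g < candidate_gpa)
    ties = sum(1 for g in class_gpas if g == candidate_gpa)
    return 100.0 * (below + 0.5 * ties) / len(class_gpas)


# Invented class of five students, for illustration only.
class_gpas = [2.0, 2.5, 3.0, 3.5, 4.0]
candidate_percentile = percentile_from_bottom(class_gpas, 3.0)
```

The same two functions could be applied either to the whole graduating class or only to the students who shared the candidate’s cohorts, which is the “actual competitors” refinement mentioned in the summary.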

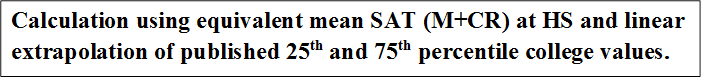

Patterns of class rank vs. SAT are very stable at both high schools and colleges: while individual SAT (Math + Critical Reading) scores show considerable scatter, the best-fit SAT score line barely changes from class to class. The mean SAT (M+CR) of the fiftieth percentile student in a class, for example, is typically within four SAT points of that value for both the preceding and the following class. The shape of the curve is similarly stable, especially at colleges: nearly linear over all but the highest and lowest class ranks.

(This averaging technique is precisely analogous to the method almost universally used in quantum mechanics to find easily observed values by averaging over many quantized values: each student being analogous to a quantum particle like a photon. This made the calculation trivial in my mind, but totally unfamiliar to anyone outside quantum physics: about 99.9% of the population, I would guess. For 16 years, I used the technique unnoted and unexplained, until I finally realized that real people never use or encounter this method.)

Therefore the mean equivalent SAT values for a given percentile class rank can be taken from those of the most recently graduated class at that high school.

Further, most American colleges publish the mean SAT scores for their 25th and 75th percentile first year students, and tables of these values are updated every year. Like the earlier values, these values are typically stable from year to year: about 200 SAT points apart, with only slowly changing median values.

By most reports, a typical college application gets about eight minutes of review. Within those precious 480 seconds, the reviewer must assess both the quantitative information like GPA, and the subjective information like essays and recommendations. Making the quantitative task sound and simple is surely beneficial to all in a process which literally shapes lives, costs the nation many billions of dollars a year, and has so much good and bad potential. This is particularly true as both students and colleges look internationally or nationwide rather than just statewide or regionally.

A proposed solution: The patterns above allow direct prediction of a candidate’s first year class rank at a college by finding the class rank at that college with the same mean SAT as the candidate’s high school class rank: see the graph above. Note that this automatically adjusts for:

1. The academic aptitude of the candidate’s actual high school competitors and potential college competitors.

2. The grading patterns of the specific high school cohorts where the candidate earned grades.

3. The grading pattern at the college under consideration.

Voila! Local murk becomes specific college clarity.

Note that this method avoids the need to change either the overall grading pattern at the school or the many cohort patterns.
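The matching step can be sketched in Python as follows. This is only a minimal illustration under stated assumptions: the high school curve is represented as a few (percentile, mean SAT) points with straight lines between them, and the college curve is treated as a single straight line through its published 25th and 75th percentile SAT values, which relies on the near-linearity noted earlier and will be least reliable at the highest and lowest ranks. All function names and numbers are hypothetical.

```python
def interpolate(x, points):
    """Piecewise-linear interpolation through (x, y) points, clamped at the ends."""
    pts = sorted(points)
    if x <= pts[0][0]:
        return float(pts[0][1])
    if x >= pts[-1][0]:
        return float(pts[-1][1])
    for (x0, y0), (x1, y1) in zip(pts, pts[1:]):
        if x0 <= x <= x1:
            return y0 + (y1 - y0) * (x - x0) / (x1 - x0)


def predict_college_percentile(hs_percentile, hs_curve, college_sat_25, college_sat_75):
    """Find the college class rank whose mean SAT matches the candidate's
    high school class rank.

    hs_curve: (percentile, mean SAT) points from the most recently
    graduated class at the candidate's high school.
    """
    if college_sat_75 <= college_sat_25:
        raise ValueError("75th percentile SAT must exceed the 25th percentile SAT")
    # Step 1: mean SAT (M + CR) for the candidate's high school percentile rank.
    mean_sat = interpolate(hs_percentile, hs_curve)
    # Step 2: assumed straight-line college curve, in SAT points per percentile.
    slope = (college_sat_75 - college_sat_25) / 50.0
    college_percentile = 25.0 + (mean_sat - college_sat_25) / slope
    # Keep the result within the meaningful 0 to 100 range.
    return max(0.0, min(100.0, college_percentile))


# Invented numbers, for illustration only.
hs_curve = [(10, 900), (50, 1100), (90, 1300)]
predicted = predict_college_percentile(70, hs_curve, college_sat_25=1100, college_sat_75=1300)
```

Note that the candidate’s own SAT scores never enter the calculation; only the percentile class rank and the two SAT curves are used.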

It is interesting to compare the tracking of potential new students against the (non-) tracking of graduates. Districts understandably work hard to determine exactly who will appear the first day of school, and what each student’s program for that year will be. Parents happily cooperate, since they also want things to go smoothly.

By contrast, after graduation, most Districts have only casual contact with students, with little or no quantitative reporting. Their college performance is known merely as accumulated anecdotes, ignoring the opportunity to measure their high school preparation by their first year performance in college. This makes it harder for students at low grading schools like Simsbury High School to gain admission to colleges attended by their performance peers. And it significantly reduces their chances for scholarships.

DRAFT — For Illustration Only

SIMSBURY HIGH SCHOOL

PRINTED LETTERHEAD ON 32 LB. BOND PAPER

*** INCLUDE IN APPLICATION TO “SOMEWHERE COLLEGE” ***

Student: Susan A. Example

Equivalent Nationally Normed GPA: 4.10

Projected First Year Class Rank at Somewhere College: 66th percentile from bottom

Prepared 22 June 2015.

Please see following chart for the basis of these results.

/signed/

Hope R. Eternal

Guidance Counselor